Our recent blog post discussed Google’s latest algorithm update, nicknamed Medic. This change ensured that websites containing healthcare- and medicine-related content were held to a higher quality standard than other websites. Since these websites give advice on people’s health and their money, the consequences of false or misleading information are much higher. Therefore, Google reasoned, these sites would have to try a lot harder to prove their content was worth the rankings.

For many, Medic will pass unnoticed. But it’s the latest stage in a long string of changes, enhancements and outright replacements to the core algorithm that got Google started in the late 90s. However unimportant individual changes may seem, all are geared towards enhancing the user experience by ensuring search results are high quality, well-written and well-researched.

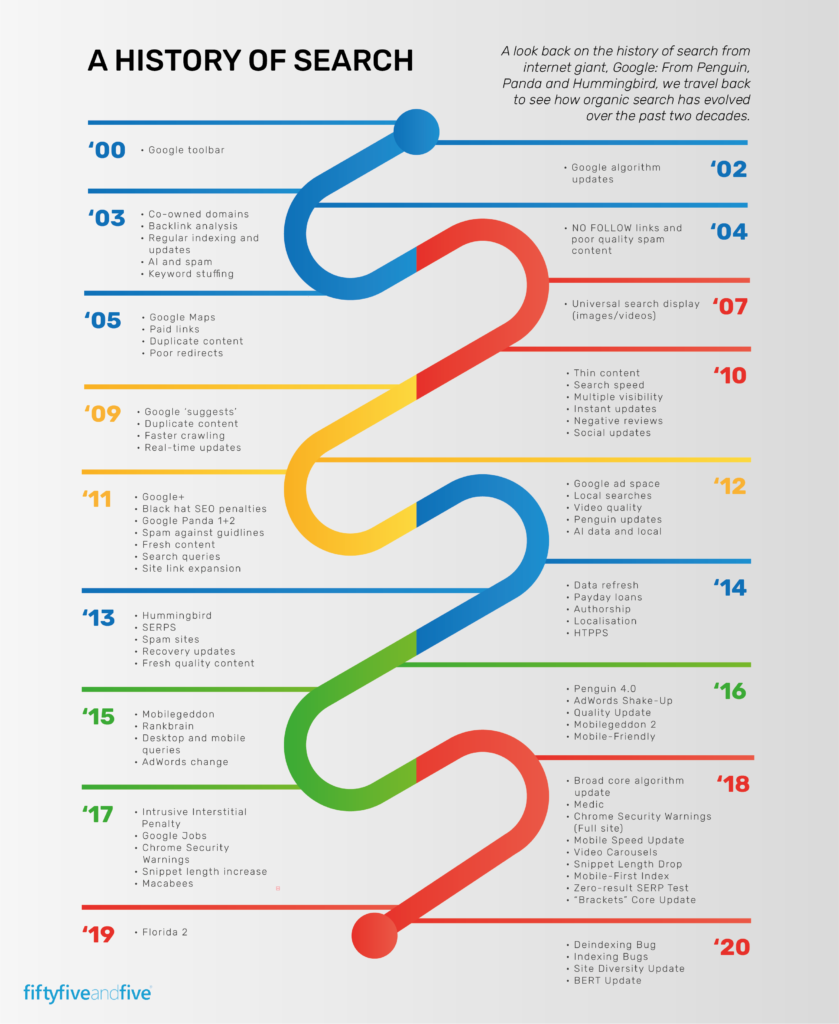

The continuing work of tweaking the Google algorithm has been underway since the early 2000s. Here’s our Google algorithm change timeline to give you an overview of the most important changes.

The war on keyword stuffing

By 2002, Google had been around for a little while, and people had started to work out how to game the system. After a few small changes to the algorithm in 2002 and 2003, the end of 2003 brought the first significant named update – Florida. It was designed to bring an end to some of the more spam-orientated SEO tactics (or ‘black hat’ tactics, as they came to be known) that had developed over the previous years.

These tactics included hidden links, invisible text and, most importantly, excessive keyword stuffing. Previously, websites overloaded with search terms would rank well, as Google assumed they were more relevant to that query. Florida was the first step in the process that penalised websites stuffed with excessive keywords, forcing content creators to write quality, relevant content if they wanted to rank. That was the intention, anyway.

Ending content farms

For a little while, Florida was enough to keep the SEO world on its toes and curb the worst excesses of spam content. By 2011, however, the internet had moved on, and another Google algorithm update was needed: Panda. In the words of Google itself, Panda was designed to penalise content that was as close to being spam as possible, without actually being spam.

Over the preceding few years, certain types of websites had begun to crop up, which became known as ‘content farms’. As the name would suggest, they specialised in a certain type of ‘thin content’: low-value, spam-like content that offered little to the user.

Panda actually had over 20 different versions, because the problem it looked to solve was more complex than one algorithm change could fix. At some point between the first and twentieth update, Panda began to turn the tide against these content farms, and today they’re a dated concept.

Similar updates from around that time also sought to deal with link malpractice. Once SEO practitioners worked out that hyperlinks contribute to rankings, the internet became awash with low-quality, irrelevant links, often placed where they weren’t needed and overloaded with keyword-optimised anchor text.

Before these updates, the algorithm struggled to differentiate between the quality and the quantity of links. Afterwards, Google could better make the distinction, ensuring links that were natural, relevant and authoritative were rewarded.

Understanding semantic meaning

Until 2013, the updates to Google’s algorithms were just that – updates. There may have been extra updates added and tweaks made, but the same basic methods that powered searches in the late 90s were still at work in 2013. By now, it was time for a real shake-up.

Not all the problems Google sought to tackle were down to black hat SEO. Much of the time, improvements were made because the algorithm wasn’t clever enough to understand what the user wanted from the search. Often, it would return results that matched on a word-for-word basis, rather than those which actually answered the user’s query.

Hummingbird was perhaps the most important algorithm change since Google launched, arriving in 2013. It allowed Google to begin understanding the user intent as well as the content in search queries.

Recently, we discussed how keywords were fast declining as a major SEO ranking factor, in favour of content that fully satisfies a query’s semantic intent. Hummingbird was the change that first heralded this.

Mobilegeddon

While Google had been busy eliminating dodgy links and content farms, the rest of the world had fundamentally shifted the way it searched the internet. The days when sitting down at your computer and typing a query into Google was the default were fast disappearing. By 2015, mobile devices had become more common than desktop computers for internet searches. That year, Google sought to change results to better reflect this.

‘Mobilegeddon’ was the nickname given to an update that significantly penalised websites that weren’t mobile-optimised. If a website couldn’t be rearranged or reconfigured to suit the smaller screen, there was a good chance that it would fall down the search rankings. Luckily, this only affected mobile searches, so website admins could feel safe that their websites would remain in the same place for desktop searches. This wasn’t to last long, however. Since the introduction of mobile-first indexing, mobile-optimised content performs better across searches on all devices.

Better searches, better results, better SEO

Most of the changes that Google have made to their algorithm since 2003 have been part of the ongoing cat-and-mouse game with black hat SEO tactics. By about 2014, they’d eliminated most of the loopholes in the algorithm, meaning the many updates since then have turned their attention towards changes that make a real difference to the quality of search results. The Medic Google algorithm update is just one of these: a focused change that increases the quality of results in a small yet significant way.

There was a time when black hat SEO tactics were merely frowned upon. Nowadays, they simply don’t work. As with any algorithm, there probably still exists a way to game the system, but these days it’s simply more effort than it’s worth. Now, if website creators want to find themselves at the top of the SERP, there’s one rule and one rule only that’ll get them there: write good content.

As a specialist full-service digital marketing agency for Microsoft Partners, we know everything there is to know about creating content and getting SERP-topping rankings for IT companies. If you want to find out more, get in touch with the team.
